DevOps maturity assessment: where does your team stand?

DevOps maturity assessment: DORA metrics, practical 5-level model, and 30–90-day action plan to help your team deliver value faster and more consistently.

March 7, 2026

DevOps is not a list of tools, nor a checkbox item like "CI/CD enabled." It is a delivery capability: how quickly, stably, and predictably the team can bring value to production and then learn from it in operation.

The purpose of a DevOps maturity assessment is therefore not to hand out school grades, but to provide a clear picture of:

where the biggest brakes in the delivery flow are,

what causes incidents, rollbacks, and excessive manual work,

and which 3–5 interventions would bring the greatest improvement in 30–90 days.

When is it worth conducting a DevOps maturity assessment?

Typical triggers (situations in which an assessment typically delivers a quick ROI):

Slow release cycles, "every deployment hurts," excessive risk aversion.

Frequent critical errors, a high change failure rate, many hotfixes.

Operational overload, a flood of tickets and alerts, high MTTR.

NIS2, ISO 27001, audits, or large corporate supplier requirements necessitate evidence (controls, traceability, logging).

You want a stable foundation before a cloud migration, a modernization effort, or breaking up a legacy system.

If DevOps is still in its "foundation" phase at your company, it is worth reviewing the conceptual and technical basics. In this regard, the article DevOps Basics: The Road from Zero to Production in 2026 may be useful supplementary reading.

What should we measure? DORA metrics as a common language

One of the best starting points for assessing DevOps maturity is the set of DORA metrics, because they measure delivery and stability outcomes directly. The DORA research program (the State of DevOps reports), run under Google Cloud, has shown for years that these indicators are strongly correlated with organizational performance.

Source: DORA metrics (Google Cloud)

The 4 classic DORA indicators:

Deployment frequency: how often you deploy to production.

Lead time for changes: how much time elapses between a commit (or merge) and the change running in production.

Change failure rate: the proportion of changes that cause errors, incidents, or rollbacks.

Time to restore service (MTTR): how long it takes to restore service in the event of a failure.

In a good maturity assessment, these indicators are interesting not in themselves but because they point to causes: where the flow stalls (approvals, testing, release, infrastructure, manual steps, silos).
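To make the four indicators concrete, here is a minimal Python sketch of how they could be computed from deployment records. The `Deployment` record shape, its field names, and the `dora_summary` function are assumptions made for illustration and do not come from any DORA tooling. As a simplification, time to restore is approximated as the time from the failed deployment to restoration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    merged_at: datetime                     # when the change was merged
    deployed_at: datetime                   # when it went live
    failed: bool = False                    # caused an incident, hotfix, or rollback
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_summary(deployments: list[Deployment], period_days: int) -> dict:
    """Compute the four DORA indicators for a period of `period_days` days."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_times = [d.deployed_at - d.merged_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    # Assumption: the failure starts at deployment time.
    restore_times = [
        d.restored_at - d.deployed_at for d in failures if d.restored_at is not None
    ]

    return {
        "deployments_per_week": len(deployments) / (period_days / 7),
        "median_lead_time_hours": median(lt.total_seconds() for lt in lead_times) / 3600,
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore_hours": (
            median(rt.total_seconds() for rt in restore_times) / 3600
            if restore_times
            else None
        ),
    }
```

Medians are used rather than means so that a single unusually slow change or long outage does not dominate the baseline.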

DevOps maturity model that works in practice

Maturity models are useful when they are not too theoretical and can be directly translated into points of intervention. The following five levels can be used effectively by many teams.

| Level | Brief description | Typical symptom | Typical focus |
| --- | --- | --- | --- |
| 0. Ad hoc | Everything manual, heroic rescues | "Only Pisti knows how to deploy it." | Core processes and responsibilities |
| 1. Reproducible | Version control and a basic build exist | Manual releases, unstable environments | CI, standard branch/PR rules |
| 2. Automated | CI/CD fundamentals, repeatable deployment | Incomplete tests, lots of rework | Test strategy, pipeline quality |
| 3. Reliable | Observability, rollback, SLO thinking | Alert noise, capacity issues | SRE-style operation, incident flow |
| 4. Optimized | Continuous improvement, platform/enablement | Local optima, complexity | Platform engineering, internal products |

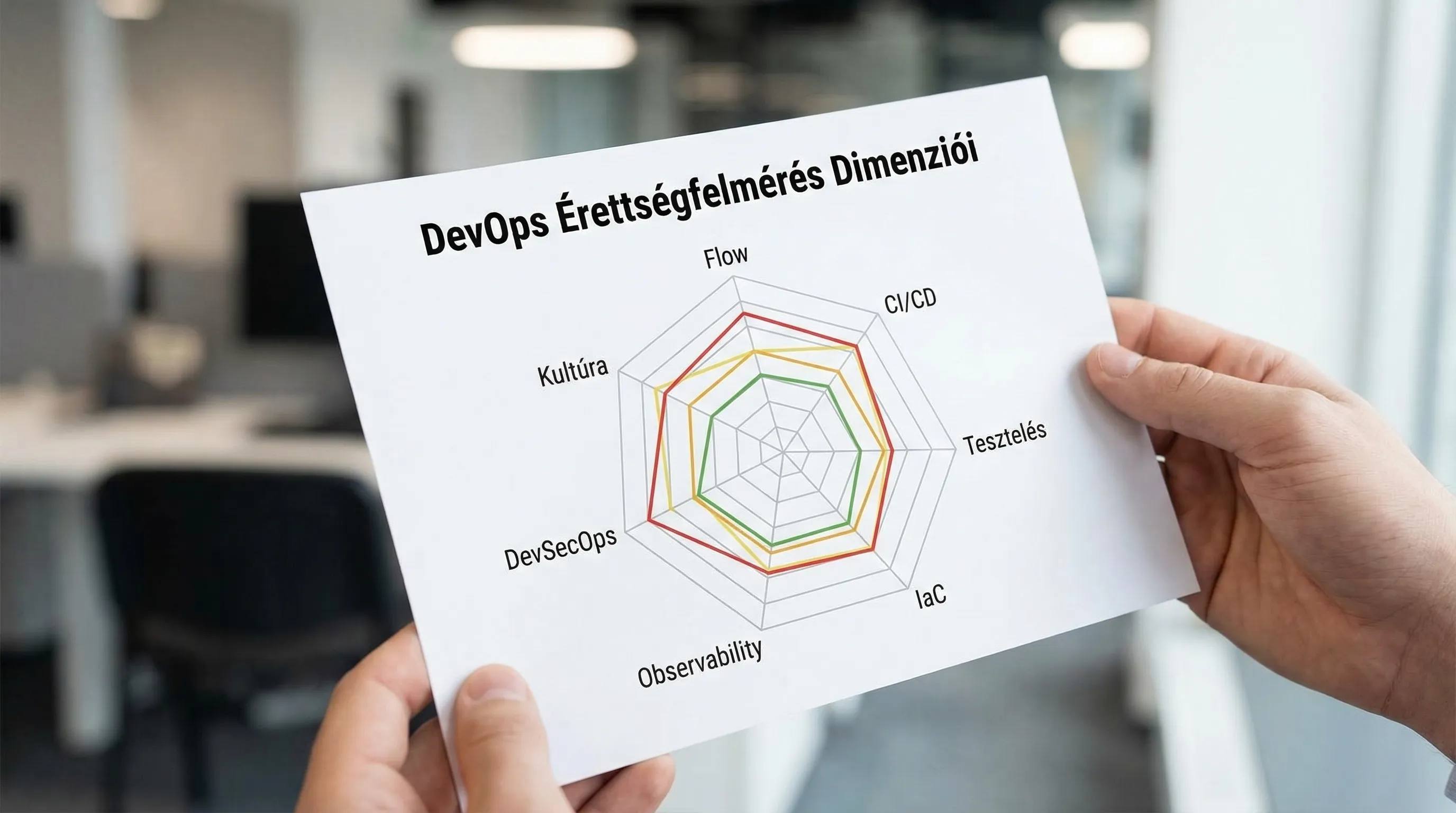

Which areas are worth examining in an assessment?

DevOps is not just about development. The assessment gives a meaningful picture only when it looks at multiple dimensions:

| Dimension | What to check | What is the tangible outcome? |
| --- | --- | --- |
| Flow and process | ticket-to-prod throughput, WIP, manual gates | bottleneck map, prioritized backlog |
| CI/CD and release | build times, pipeline reliability, rollback | pipeline standards, release policy |
| Testing | unit/integration/e2e ratio, flaky tests | test pyramid plan, quality gates |
| Infrastructure and IaC | consistency of environments, drift | IaC baseline, environment profiles |
| Observability and incidents | log/metric/trace coverage, on-call | alerting rationalization, MTTR reduction |
| DevSecOps and compliance | secrets, SAST/SCA, SBOM, audit trail | control map, policy-as-code fundamentals |
| Culture and operation | ownership, feedback loop, product focus | RACI/ownership clarification, operating model |

The security dimension is worth treating separately, because in many organizations this is the area where risk and audit pressure are greatest. If you want to go deeper on this part, see the related article DevSecOps in practice: how to build secure CI/CD.

What does a good DevOps maturity assessment look like, step by step?

The goal is to quickly establish a picture based on evidence. A typical, well-functioning process:

1) Clarification of objectives and scope (0.5–1 day)

This is where you decide what the assessment covers, because it cannot cover "everything":

Which product(s) or team(s) does this apply to?

What is the main business goal: faster release, stability, audit, cost, scaling?

What counts as success in 90 days?

Practical advice: choose 1–2 critical value streams, otherwise the assessment will be too broad and will not lead to action.

2) Data collection and artifact verification (2–5 days)

The "reality" is usually found in the traces the systems leave behind:

Git (PRs, review times, branch strategy)

CI/CD system (pipeline runs, errors, times)

Ticketing (lead time, rework, WIP)

Incident system (MTTR, common causes)

Infra (IaC repositories, environment drift)

Security tooling (SAST/SCA, secrets, artifact policy)

At this point, it is worth trying to extract a minimal baseline of the DORA metrics. The data does not have to be perfect, but approximate trends are needed.
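An approximate trend can be as simple as grouping deployments by week. The sketch below assumes you can export pairs of merge and deployment timestamps from Git and the CI/CD system; the `weekly_baseline` function and the input shape are hypothetical, chosen only for illustration.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median


def weekly_baseline(
    events: list[tuple[datetime, datetime]],
) -> dict[str, dict[str, float]]:
    """Group (merged_at, deployed_at) pairs by the ISO week of the deployment.

    Returns, per week, the number of deployments and the median lead time in hours.
    """
    lead_hours_by_week: dict[str, list[float]] = defaultdict(list)
    for merged_at, deployed_at in events:
        iso = deployed_at.isocalendar()  # (ISO year, ISO week, ISO weekday)
        week_key = f"{iso[0]}-W{iso[1]:02d}"
        lead_hours = (deployed_at - merged_at).total_seconds() / 3600
        lead_hours_by_week[week_key].append(lead_hours)

    return {
        week: {"deployments": len(hours), "median_lead_time_hours": median(hours)}
        for week, hours in sorted(lead_hours_by_week.items())
    }
```

Even with gaps in the data, a few weeks of such numbers are usually enough to see whether lead time is trending up or down.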

3) Interviews and workshop (1–2 days)

The numbers tell us there is a problem, and people tell us why.

Good interview candidates:

developers and tech leads

QA or test automation

platform/DevOps engineers

operations/on-call

product owner or delivery manager

security/compliance (if applicable)

The aim of the workshop: a shared understanding of bottlenecks and shared priorities for improvements. If you are interested in the governance side of predictable delivery, see the article Project Management in IT: How to Make Delivery Predictable.

4) Evaluation and scoring (1–2 days)

The score itself is not the point; comparability and focus are. Here is an example of a simple, understandable scale for each dimension:

| Score | Meaning | What does this mean in practice? |
| --- | --- | --- |
| 1 | Hardly exists | Ad hoc, manual, non-reproducible |
| 2 | Exists, but unstable | The tooling is in place, but there are many exceptions |
| 3 | Works | Standardized, mostly reliable |
| 4 | Confident | Measured, with feedback and continuous improvement |
| 5 | Scaled | Extended to multiple teams, platform-based |
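A scale like this can be turned into a focus list with very little code. The following sketch assumes one integer score from 1 to 5 per dimension; the `summarize_scores` function and its `focus_count` parameter are hypothetical names used for illustration.

```python
def summarize_scores(scores: dict[str, int], focus_count: int = 3) -> dict:
    """Return the average score and the lowest-scoring dimensions."""
    if not scores:
        raise ValueError("at least one dimension score is required")
    for dimension, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{dimension}: score must be between 1 and 5, got {score}")

    # sorted() is stable, so dimensions with equal scores keep their input order.
    ranked = sorted(scores.items(), key=lambda item: item[1])
    return {
        "average": round(sum(scores.values()) / len(scores), 2),
        "focus": [dimension for dimension, _ in ranked[:focus_count]],
    }
```

The average is useful mainly for comparing the same team over time; the focus list is what feeds the action plan.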

5) Action plan and roadmap (0.5–1 day)

The end result of a good assessment is not a 40-page PDF, but a decision-support package:

3–5 key issues and business impact (risk, cost, lead time)

recommended interventions (effort vs. impact)

30–60–90-day plan, with owners

metrics (DORA + local KPIs)
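One simple way to weigh effort against impact is to rank interventions by their impact-to-effort ratio. This is only a sketch under stated assumptions: the `Intervention` fields, the 1–5 estimate scales, and the `prioritize` function are hypothetical, and real prioritization will also consider dependencies and risk.

```python
from dataclasses import dataclass


@dataclass
class Intervention:
    """A candidate improvement (hypothetical record shape)."""
    name: str
    impact: int  # estimated, 1 (low) to 5 (high)
    effort: int  # estimated, 1 (low) to 5 (high)
    owner: str


def prioritize(items: list[Intervention], top_n: int = 5) -> list[Intervention]:
    """Rank by impact/effort ratio, highest first; ties go to the lower effort."""
    for item in items:
        if item.effort < 1 or item.impact < 1:
            raise ValueError(f"{item.name}: impact and effort must be at least 1")
    ranked = sorted(items, key=lambda i: (-i.impact / i.effort, i.effort))
    return ranked[:top_n]
```

Keeping the `owner` on each record makes it straightforward to carry the ranked list into the 30–60–90-day plan.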

What questions does the assessment consist of? (short sample)

You don't need 200 questions. 25–40 well-targeted questions are often enough. Examples that quickly reveal true maturity:

What is the lead time from merge to production, and where is most of that time spent?

How long does a deployment take on average, and what is the most common cause of failure?

Is there a standard rollback or feature-flag mechanism, or is every incident a "one-off operation"?

Where are the manual steps in the pipeline, and why?

How common are flaky tests, and what is the policy on them?

Is there a unified log/metric/trace strategy and SLO-type goals?

How do you handle secrets, and is there auditable access?

Who owns a service throughout its life in production?
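The question about flaky tests can often be answered from CI history. The sketch below uses one common working definition, stated here as an assumption: a test is flaky if it both passed and failed on the same commit. The `find_flaky_tests` function and the input tuple shape are hypothetical.

```python
from collections import defaultdict


def find_flaky_tests(runs: list[tuple[str, str, bool]]) -> list[str]:
    """Find tests with mixed outcomes on the same commit.

    Each run is (test_name, commit_sha, passed).
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)

    # Two distinct outcomes for the same code means the result is not deterministic.
    flaky = {test for (test, _), seen in outcomes.items() if len(seen) == 2}
    return sorted(flaky)
```

A test that fails consistently on one commit and passes on another is not counted, since that usually reflects a real change in the code rather than flakiness.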

Typical "red flags" in DevOps maturity

These are not uncommon, and the good news is that they are usually treatable.

Tooling-first thinking: "let's get another system," while flow and ownership are unclear.

Manual release ritual: fixed night-time deployments, many participants, high stress.

There is no single definition of what constitutes "done" (DoD) or what counts as a release.

Lack of observability: logs exist but are not searchable; alerts exist but are not relevant.

Security after the fact: only at the end of the pipeline does it turn out that a dependency is vulnerable.

What brings fast improvement? The 30–60–90-day focus

Improving DevOps maturity often does not require a major reorganization, but rather a combination of a few good interventions. Typical focus areas that scale well:

0–30 days: stable foundations and measurability

DORA baseline measurement (approximate but consistent)

standard branch/PR policy, minimum quality gates

pipeline stabilization (elimination of common errors and slowdowns)

incident review framework (blameless postmortem basics)

31–60 days: automation and risk reduction

test strategy refinement (hunting down flaky tests, fast integration tests)

release risk reduction (feature flag, canary, blue-green where appropriate)

IaC baseline (reducing environment drift)
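Environment drift is, at its core, a difference between what is declared and what is actually running. In practice an IaC tool's own plan or diff command is usually the right way to detect it; the sketch below only illustrates the idea on plain dictionaries. The `detect_drift` function, the `ABSENT` marker, and the setting names in the usage note are hypothetical.

```python
ABSENT = "<absent>"  # marker for a setting that exists on only one side


def detect_drift(declared: dict, actual: dict) -> dict[str, tuple]:
    """Return {setting: (declared_value, actual_value)} for every difference."""
    drift = {}
    for key in sorted(declared.keys() | actual.keys()):
        declared_value = declared.get(key, ABSENT)
        actual_value = actual.get(key, ABSENT)
        if declared_value != actual_value:
            drift[key] = (declared_value, actual_value)
    return drift
```

For example, comparing a declared `{"min_replicas": 2}` against an observed `{"min_replicas": 3}` would report `min_replicas` with both values, which is the kind of evidence a drift review needs.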

61–90 days: scaling and operating model

service ownership and on-call schedule (meaningful rotation, runbook)

alerting rationalization (noise reduction, actionability)

risk-based integration of DevSecOps controls (secrets, SCA, SBOM, artifact signing)
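Alerting rationalization usually starts with finding the rules that fire often but rarely lead to action. The following sketch assumes each alert has been labeled as actionable or not during review; the `noisy_alert_rules` function, its thresholds, and the input shape are hypothetical and should be tuned to your own context.

```python
from collections import Counter


def noisy_alert_rules(
    alerts: list[tuple[str, bool]],
    min_count: int = 5,
    max_actionable: float = 0.2,
) -> list[tuple[str, int, float]]:
    """Find alert rules that fire often but are rarely actionable.

    Each alert is (rule_name, was_actionable). Returns (rule, count, actionable
    ratio) tuples, most frequent first.
    """
    fired = Counter(rule for rule, _ in alerts)
    acted_on = Counter(rule for rule, actionable in alerts if actionable)

    noisy = []
    for rule, count in fired.items():
        ratio = acted_on[rule] / count
        if count >= min_count and ratio <= max_actionable:
            noisy.append((rule, count, ratio))
    return sorted(noisy, key=lambda entry: (-entry[1], entry[0]))
```

Rules on this list are candidates for a higher threshold, a longer evaluation window, or removal.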

How can Syneo help with this?

Syneo's focus is to support the implementation of digitization and IT projects in a way that is commercially meaningful, measurable, and auditable. In DevOps maturity assessments, this typically means that the assessment is not a "theoretical diagnosis," but rather:

a common baseline with the team (measurement + interviews + artifacts),

a prioritized improvement backlog and a 30–90-day plan,

and implementation support as needed (CI/CD, IaC, observability, DevSecOps directions).

If you want to quickly assess where you stand, it is worth briefly summarizing your starting point and goals, and then launching a focused assessment of the most critical value stream based on these.