Predictive maintenance: how to reduce machine downtime?

AI

Predictive maintenance: how to reduce machine downtime? | Syneo

A practical guide to predictive maintenance: when it is worthwhile, what data and AI methods are needed, KPIs, and a 90-day pilot plan for reducing downtime.

predictive maintenance, PdM, machine time, downtime, SCADA, CMMS, OEE, anomaly detection, data collection, AI, digitalization

February 17, 2026

Unplanned machine downtime is one of the most expensive problems in manufacturing because it simultaneously reduces capacity, delays deadlines, and often causes a domino effect (quality issues, scrap, overtime, urgent parts procurement). The goal of predictive maintenance is to reduce this unpredictability: to use data to predict when it is worth intervening, before a failure occurs, but later than preventive maintenance carried out "too early for safety."

In this article, you will find a practical, implementable framework: when it is worthwhile, what data is needed, what AI approaches work in reality, and how to measure its impact so that the project is not just a technological experiment, but a business success.

What is predictive maintenance, and how does it differ from other approaches?

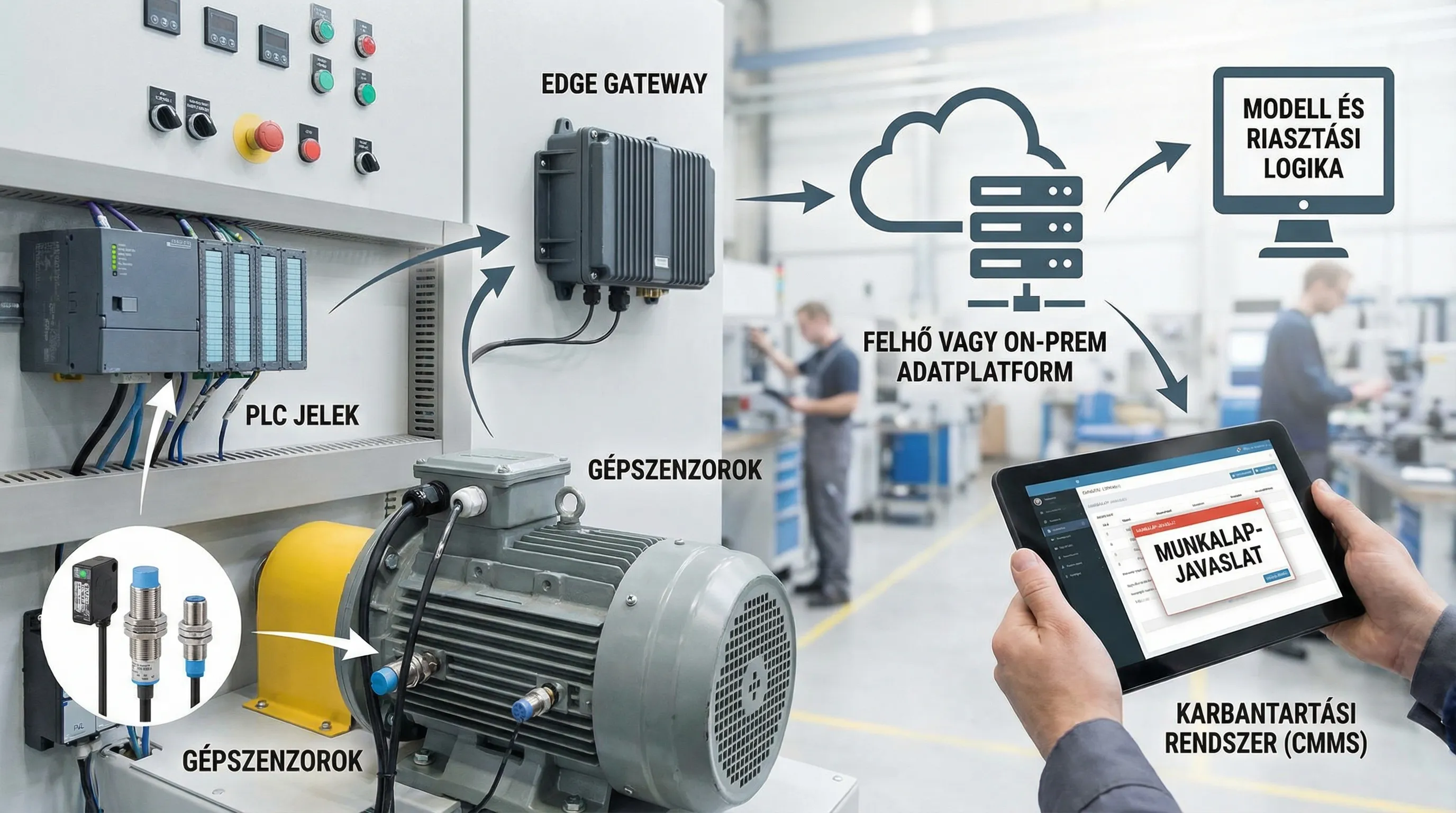

Predictive maintenance (PdM) is an advanced version of condition-based maintenance: it uses sensors and operating data to determine the condition of the equipment and then indicates the expected failure or the optimal time for intervention.

Important: PdM is not the same as "adding AI." In many cases, the best results come from a step-by-step approach: basic monitoring, then rule-based alerts, and finally machine learning where it really adds value.

Maintenance strategy | When will you intervene? | Advantage | Disadvantage | Typical data requirements |

Reactive (firefighting) | After an error | Simple, seems cheap in the short term | Long downtime, expensive repairs, unpredictable | Minimum |

Preventive (time/based) | By calendar or operating hours | More predictable | Many unnecessary replacements, yet unexpected failures can still occur | Operating hours, maintenance plan |

Condition-based | Based on measured status | More targeted intervention | Setting thresholds, alarm noise | Basic sensor data |

Predictive | Based on forecasts, at the optimal time | Fewer unplanned downtimes, better component design | Data, integration, operation complex | Sensor, PLC/SCADA, maintenance events |

When is it worth getting involved? (And when is it not?)

Predictive maintenance delivers a quick ROI when equipment failure really "hurts": it causes downtime, poses a quality risk, or is expensive to repair.

It is a good sign if several of the following apply:

There are 3–10 critical machines or machine groups that frequently cause downtime or where the hourly cost of downtime is high.

The fault is typically not immediate, but there are warning signs (vibration, temperature, current consumption, pressure, noise, lubricant condition, cycle time variation).

At least some data is available (PLC, SCADA, historical, maintenance log, scrap, OEE, quality).

There is a team that can respond to alerts (maintenance, shift management) and is willing to refine the process.

Less favorable sign:

Most faults occur randomly due to external causes (e.g., operator errors, collisions, material supply problems) and have no "physical" warning signs.

No minimum data discipline (repairs without work orders, different machine IDs, missing timestamps).

The goal is not stated in KPIs (only that "there should be AI").

Basis for reducing machine downtime: good fault image and criticality

Before choosing a sensor or model, it is worth clarifying the following in 1–2 workshops:

What is your definition of "downtime"? When does it count as micro-downtime, and when is it planned downtime?

Which 5–10 types of faults account for 60–80% of outages?

For which errors does forecasting make sense? (There are signs, and there is a window of opportunity to intervene.)

What is intervention? Replacement of parts, adjustment, lubrication, cleaning, parameterization, operator intervention?

This is critical because the ultimate goal of predictive maintenance is not to have an "accurate model," but to make better decisions sooner.

What data is needed for predictive maintenance?

At most manufacturing sites, 70% of the necessary data already exists, but it is fragmented.

1) Machine and process data (OT)

Typical sources:

PLC signals (states, counters, cycle time)

SCADA or historical time series

Built-in sensors (temperature, pressure, current)

External sensors: vibration (bearings), ultrasound (leaks), thermography (heating), acoustics

2) Maintenance events (IT / EAM / CMMS)

You don't always need years of data to "train" the model, but you do need at least a traceable reality:

worksheets (cause of fault, type of repair, part)

time and duration of intervention, condition of the machine

MTTR, parts usage

3) Production and quality data

Many malfunctions are not initially visible on the sensor, but rather in performance:

OEE components (Availability, Performance, Quality)

rejects, rework, quality deviations

cycle time variance, micro-stops

4) Context (without which the model will lead you astray)

shift, product variant, recipe

environmental conditions (temperature, humidity)

settings, tool change

How does AI work in practice? Three proven approaches

Predictive maintenance is typically not a single model, but a combination of several methods.

Anomaly detection (if there are few tagged errors)

If errors are rare (which is good), then the classic "teach by error" approach is difficult. In such cases, it works well if the system learns normal operation and alerts you when the signal pattern deviates from this.

The key to success is that the alert should not just be a "red light," but should provide context:

which sensor deviates

since

how critical

under what operating conditions it occurred

RUL and trend-based prediction (if there is gradual deterioration)

Bearings, belts, lubrication problems, and overheating often show a clear deterioration trend. In such cases, the goal is to estimate the remaining useful life or an "intervention window."

Probability of error (if there are recurring patterns)

If certain types of errors can be clearly distinguished, classification can be used: estimate the probability of an error occurring in the next X hours based on a given signal pattern + circumstances.

The real value: integration into daily operations (CMMS, ERP, warehouse)

Predictive maintenance most often fails when there is a dashboard, but no decision-making and no execution.

The working chain usually looks like this:

Perception (sensor, historian)

Interpretation (rule or model)

Decision (priority, risk)

Implementation (worksheet, schedule)

Feedback (what was the result of the repair)

If you have a CMMS/EAM or ERP maintenance module, the goal is to turn the alert into a work order proposal, not just an email.

Scalability also requires "AI operation": versioned models, monitoring, and alarm quality measurement. This is where DevOps-oriented solutions and continuous monitoring come in handy (related topic: DevOps solutions in 2026).

KPIs: how to prove that machine downtime has actually decreased?

The most important rule: without a baseline, there is no return on investment. The evaluation of PdM projects is accurate if you record the initial status in advance (e.g., 8 weeks) and then measure the same indicators after the pilot.

KPI | What does it measure? | Why is it important in predictive maintenance? | Typical data source |

Unplanned downtime (hours/month) | Total unexpected downtime | This is the direct target indicator. | SCADA, OEE, production log |

MTBF | Average time between two faults | You have to grow up if you want to prevent mistakes. | CMMS, maintenance log |

MTTR | Average repair time | May decrease due to improved diagnosis and parts availability | CMMS, shift report |

OEE Availability | Availability | Summarizes the impact of downtime | OEE system |

Alarm "usefulness" | How many alarms resulted in actual intervention? | Avoiding alarm fatigue | PdM system + CMMS |

Tip: In the pilot, it is worth measuring the "prevention rate" estimate separately, for example, based on cases validated by maintenance (because many other factors also influence the overall OEE).

90-day, risk-reduced implementation plan (pilot)

Many organizations don't get started because it seems like "too big a project." Predictive maintenance, however, can be piloted well if the focus is narrow.

0–30 days: focus and data reality

Critical equipment list, top error types

Downtime definition and baseline measurement

Mapping data sources (PLC, historical, CMMS)

Minimal data model (machine ID, time, status, event)

31–60 days: instrumentation and first alarms

Sensing of 1–3 devices, if necessary (vibration, heat, current)

Stabilization of data collection (time synchronization, missing data)

Rule-based thresholds and simple trends

Alert workflow coordination with maintenance

61–90 days: model, integration, measurement

Anomaly detection or targeted prediction

Linking alarms to workflows

Pilot KPI evaluation, scaling plan

If the organization is still in its early stages, it is worth building PdM on broader digitalization foundations. A useful framework for this is a step-by-step approach to corporate digitalization.

Common pitfalls that prevent downtime from decreasing

“AI first, data later”

If the signal quality is poor, the labeling is incomplete, or the machine identification is inconsistent, the model will not function reliably. In such cases, the data and integration foundations must first be put in order.

Alarm fatigue

Too many poorly prioritized alerts will cause the team to become immune. The number and accuracy of alerts should be measured in the same way as downtime.

No operations manager and no routine

PdM is not a one-time development. It requires an owner (operations or reliability engineer) whose job is to improve alarm quality and provide feedback.

Cybersecurity in an OT environment

In the case of sensors, gateways, and cloud data connections, it is mandatory to clarify security fundamentals (segmentation, authorization management, logging). In an industrial environment, NIST SP 800-82 (ICS security) recommendations are a good starting point.

Real-life example: what does this look like in practice?

Predictive maintenance is typically most effective when it is part of a digitalization program: data integration, sensor technology, and the incorporation of results into daily processes.

In one of Syneo's case studies, an approach based on a sensor network, ERP, and machine learning resulted in a 35% reduction in machine downtime at a manufacturing company after implementation (details: Case study: digitization and AI integration).

How can Syneo help?

If your goal is to reduce machine downtime, the fastest route is usually a narrowly focused assessment + 90-day pilot, in which we clarify critical assets, data flow, alerting processes, and metrics together. Predictive maintenance is often also an integration issue (CMMS/ERP/SCADA), so coordination between IT and operations is just as important in implementation.

If you are currently planning your next step, check out when it is worth involving an external expert: IT consulting: when is it necessary and what do you get in return?

Good predictive maintenance is ultimately not an "AI project," but a reliability system: data, process, responsibility, and continuous fine-tuning. When these elements come together, downtime does not disappear, but it becomes predictable and manageable, and this is what will give most plants a real competitive advantage in 2026.