AI pilot in 30 days: use case, data, KPIs, risks

A quick, practical guide to 30-day AI pilots: use case selection, minimum data package, KPIs, risks, and weekly deliverables for go/no-go decisions.

March 2, 2026

An AI pilot is worthwhile if, after 30 days, it gives management not just a demo but a go/no-go decision, a measured business impact, and a plan for scaling. This requires a disciplined scope, sufficient data, well-defined KPIs, and deliberate risk management. Pilots typically fail because the use case is too broad, there is no baseline, or it is unclear what counts as success.

The framework below is intended for companies that want to try out AI quickly but in a controlled manner in 2026, whether it be LLM-based automation or classic machine learning.

What can be accomplished in 30 days (and what cannot)

The "30-day AI pilot" is typically a validation project, not a complete product.

Pilot objective: to verify business value using metrics, check technical feasibility, and identify risks.

Not a goal: full integration with all systems, 100 percent automation, coverage of all edge cases, organizational restructuring.

If the pilot shows that the solution generates value, the second phase follows: stabilization, scaling, governance, and an operating model.

Related thoughts: pilot logic appears in several Syneo articles, such as the article on the starting points for digitalization in 2026.

1) Use case selection: the fastest route to measurable results

The best 30-day pilot use case is all of the following at once:

painful (there are tangible costs, delays, SLA violations, error rates)

measurable (data and a baseline are available)

manageable in risk (GDPR, business operations, security)

narrowable (1 process step, 1 channel, 1 site, 1 product family)

Quick scoring: business value vs. feasibility vs. risk

The simple scoring system below helps to prioritize pilot-ready use cases over "cool ideas."

| Consideration | What should you examine? | Example of a green signal | Example of a red signal |
| --- | --- | --- | --- |
| Business value | How much time, money, or loss depends on it? | many repetitive working hours, measurable downtime | "It would be nice if..." type of goal |
| Data access | Can you access 1-2 data sources within 1 week? | API, export, logs, ticket system | "We will ask the supplier." |
| Data quality | Is it good enough for the pilot? | structured fields, consistent identifiers | fragmented master data, missing keys |
| Operational implementability | Who uses it, where does it run, how is it controlled? | shadow mode, human approval | automatic decision in a critical process |
| Legal and compliance | Does it involve sensitive data or high-risk categories? | can be anonymized, limited user group | special-category data, non-transparent decision |
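
The scoring table above can be turned into a small ranking script. The following is a minimal sketch, not a prescribed method: the 1-5 scale, the weights, the red-flag threshold, and the three example use cases are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Criteria follow the table above. The weights are assumed, not given in the article.
WEIGHTS = {
    "business_value": 0.30,
    "data_access": 0.20,
    "data_quality": 0.20,
    "operability": 0.15,
    "compliance": 0.15,
}


@dataclass
class UseCase:
    name: str
    scores: dict  # criterion -> 1 (red signal) to 5 (green signal)


def weighted_score(use_case):
    """Weighted average of the criterion scores, between 1.0 and 5.0."""
    return sum(WEIGHTS[c] * use_case.scores[c] for c in WEIGHTS)


def has_red_flag(use_case, threshold=2):
    """One very low score blocks the pilot regardless of the average."""
    return any(use_case.scores[c] <= threshold for c in WEIGHTS)


def rank(use_cases):
    """Drop red-flagged candidates, then sort by weighted score, best first."""
    ready = [u for u in use_cases if not has_red_flag(u)]
    return sorted(ready, key=weighted_score, reverse=True)


# Hypothetical candidates with assumed scores
candidates = [
    UseCase("ticket triage", {"business_value": 4, "data_access": 5,
                              "data_quality": 4, "operability": 4, "compliance": 4}),
    UseCase("automatic credit decision", {"business_value": 5, "data_access": 3,
                                          "data_quality": 3, "operability": 2, "compliance": 1}),
    UseCase("invoice data extraction", {"business_value": 4, "data_access": 4,
                                        "data_quality": 3, "operability": 4, "compliance": 4}),
]
ranked = rank(candidates)
for use_case in ranked:
    print(f"{use_case.name}: {weighted_score(use_case):.2f}")
```

The red-flag rule is deliberate: a high business value should not be able to average away a compliance or operability problem.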

If you are looking for manufacturing-focused ideas, check out this article for inspiration: AI in manufacturing: 6 use cases that quickly pay for themselves.

2) Data: the "minimum viable data package" for the pilot

It is rarely possible to build a large data platform in 30 days. However, it is possible to develop a pilot data package: exactly the amount of data needed to measure KPIs and validate the model.

Pilot data package: what should it contain?

| Element | Why is it necessary? | Typical question |
| --- | --- | --- |
| List of data sources | transparency and quick access | CRM? ERP? Ticket system? SharePoint? |
| Data owner | decisions, entitlements, definitions | Who decides what counts as a "closed case"? |
| Minimum fields | control of scope and lead time | Do you really need all the fields? |
| Time window | baseline and handling of seasonal effects | Is 3-6 months enough? |
| Identifiers | linking records across systems | Is there a common client or case number? |
| Data protection approach | GDPR and internal rules | Is pseudonymization necessary? |
| Quality rules | rapid detection of errors | Are there duplicates, missing values, outliers? |
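
The "quality rules" row can be checked with a few lines of code before any modeling starts. This is a minimal sketch using only the Python standard library. The field names (`ticket_id`, `resolution_hours`), the sample rows, and the outlier threshold of 5 median absolute deviations are assumptions for illustration.

```python
from collections import Counter
from statistics import median


def quality_report(rows, key_field, numeric_field, mad_threshold=5.0):
    """Count duplicate keys, missing values, and outliers in a list of dict records."""
    # Duplicates: the same non-empty key appears more than once
    key_counts = Counter(r.get(key_field) for r in rows if r.get(key_field) not in (None, ""))
    duplicate_keys = sorted(k for k, n in key_counts.items() if n > 1)

    # Missing values: None or empty string in any field
    missing_values = sum(1 for r in rows for v in r.values() if v is None or v == "")

    # Outliers: distance from the median, measured in median absolute deviations (MAD)
    values = [r[numeric_field] for r in rows if isinstance(r.get(numeric_field), (int, float))]
    outliers = []
    if values:
        med = median(values)
        mad = median([abs(v - med) for v in values])
        if mad > 0:
            outliers = [v for v in values if abs(v - med) / mad > mad_threshold]

    return {
        "duplicate_keys": duplicate_keys,
        "missing_values": missing_values,
        "outliers": outliers,
    }


# Hypothetical export from a ticket system
rows = [
    {"ticket_id": "T1", "resolution_hours": 4.0},
    {"ticket_id": "T2", "resolution_hours": 5.0},
    {"ticket_id": "T2", "resolution_hours": 6.0},
    {"ticket_id": "T3", "resolution_hours": None},
    {"ticket_id": "T4", "resolution_hours": 5.5},
    {"ticket_id": "T5", "resolution_hours": 400.0},
]
report = quality_report(rows, "ticket_id", "resolution_hours")
print(report)
```

The median-based rule is used here because a single extreme value would distort a mean and standard deviation on a small pilot sample.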

If the quality of the data is questionable, it is worth focusing on this specifically: Data quality audit: why AI projects fail.

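LLM (RAG) pilot: the knowledge base is your "training data"

Many 30-day projects build on retrieval-augmented generation (RAG), where an LLM searches a knowledge base and generates answers from what it finds. Here, the data is not just tables but documents, so three things need to be settled: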

which documents are considered "truth" (policy, product documentation, internal procedures)

version management and updates (who is responsible for the content)

permissions (who can see what)
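
The three points above (source of truth, versioning and ownership, permissions) can be carried as metadata on each document. The sketch below is a toy illustration under stated assumptions: the `Document` fields, the group names, and the keyword-overlap retrieval are all hypothetical, and a real pilot would use a search index or embeddings instead. The point it shows is that permission filtering happens before retrieval, so the model never sees text the user is not allowed to read.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    doc_id: str
    text: str
    version: str          # version management
    owner: str            # who is responsible for the content
    allowed_groups: set = field(default_factory=set)  # who can see it


def visible_documents(documents, user_groups):
    """Keep only documents that share at least one group with the user."""
    groups = set(user_groups)
    return [d for d in documents if d.allowed_groups & groups]


def retrieve(documents, query, user_groups, top_k=2):
    """Toy retrieval: rank permitted documents by the number of shared words."""
    terms = set(query.lower().split())
    scored = []
    for doc in visible_documents(documents, user_groups):
        overlap = len(terms & set(doc.text.lower().split()))
        if overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


# Hypothetical knowledge base
knowledge_base = [
    Document("policy-refund", "refund policy for damaged products", "v3",
             "customer-service-lead", {"support", "sales"}),
    Document("hr-salary", "salary bands and refund of travel costs", "v1",
             "hr-lead", {"hr"}),
    Document("product-x", "product x installation guide", "v7",
             "product-manager", {"support"}),
]

support_hits = retrieve(knowledge_base, "refund policy", {"support"})
hr_hits = retrieve(knowledge_base, "refund policy", {"hr"})
print([d.doc_id for d in support_hits], [d.doc_id for d in hr_hits])
```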

A good example for building a pilot in customer service: AI-based customer service: SLA improvement in 30 days.

3) KPI and baseline: how the pilot becomes a business decision

The most important artifact of the pilot is not the model, but the measurement plan. Without a baseline, there is no "before and after," and the pilot becomes a competition of opinions.

KPI planning rule: 2-3 main KPIs, plus 2-3 guardrails

Key KPI: shows business value (time, cost, quality, revenue).

Guardrail KPI: ensures that the improvement does not come with harmful side effects (error rate, complaints, security incidents, compliance deviations).

| Pilot type | Key KPI examples | Guardrail examples |
| --- | --- | --- |
| Customer service automation | first response time, resolution time, self-service ratio | reopen rate, escalation rate, CSAT |
| Document processing, OCR/NLP | throughput time, touchless ratio | incorrect extraction rate, manual correction rate |
| Prediction (e.g., maintenance) | downtime reduction proxy, prediction accuracy | false positive rate, alarm load |
| Sales support | lead response time, time to quote | incorrect offer ratio, compliance checks |

When formulating KPIs, decide during the pilot phase where the actual measurement data will come from (log, ERP item, ticket status, BI metric). For background on the integration logic, see: system integration between ERP, CRM, and BI.

Measurement technology that fits into 30 days

The fastest and safest solution for most companies:

shadow mode: AI makes suggestions, but the process is still carried out by humans

sampling: specific types of cases, product groups, or locations

predefined acceptance criteria: e.g., "at least X percent time savings and an error rate below Y."
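
The three elements above fit together in a simple shadow-mode log: for each sampled case, record what the AI suggested, what the human decided, and how long handling took. The following is a minimal sketch with made-up records. The field names and thresholds are assumptions, and treating "the human overrode the suggestion" as the error rate is a simplification chosen for this example.

```python
from statistics import mean

# Hypothetical shadow-mode records: the human decision is always the one executed.
shadow_log = [
    {"case": "T-101", "ai": "refund",   "human": "refund",   "baseline_min": 10.0, "assisted_min": 6.0},
    {"case": "T-102", "ai": "escalate", "human": "escalate", "baseline_min": 10.0, "assisted_min": 7.0},
    {"case": "T-103", "ai": "refund",   "human": "reject",   "baseline_min": 10.0, "assisted_min": 8.0},
    {"case": "T-104", "ai": "close",    "human": "close",    "baseline_min": 10.0, "assisted_min": 9.0},
    {"case": "T-105", "ai": "escalate", "human": "escalate", "baseline_min": 10.0, "assisted_min": 10.0},
]


def agreement_rate(log):
    """Share of cases where the human kept the AI suggestion."""
    return sum(1 for r in log if r["ai"] == r["human"]) / len(log)


def time_savings(log):
    """Relative reduction of mean handling time versus the baseline."""
    return 1 - mean(r["assisted_min"] for r in log) / mean(r["baseline_min"] for r in log)


def meets_criteria(log, min_time_savings, max_disagreement):
    """Acceptance check: 'at least X percent time savings and an error rate below Y'."""
    disagreement = 1 - agreement_rate(log)
    return time_savings(log) >= min_time_savings and disagreement <= max_disagreement


print(f"agreement: {agreement_rate(shadow_log):.0%}, savings: {time_savings(shadow_log):.0%}")
print(meets_criteria(shadow_log, min_time_savings=0.15, max_disagreement=0.25))
```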

4) Risks: what needs to be priced in before the pilot

Fast piloting does not mean "uncontrolled AI." The goal is to make risks visible, manageable, and mitigable.

Typical risk categories

Data protection and authorization: personal data, access levels, logging.

Security: key and secret management, supplier risk, misuse of model output.

Quality and reliability: LLM hallucinations, model drift in ML, edge cases.

Operation: who approves, what the fallback is, and what the escalation path is when an error occurs.

Legal and compliance: EU AI Act classification, internal regulations, auditability.
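
For the data protection category, one common pilot-phase measure is to replace direct identifiers before text reaches prompts or logs. The sketch below uses a keyed hash (HMAC-SHA256) from the Python standard library. The email pattern, the token format, and the sample secret are assumptions for illustration, and the approach covers email addresses only, not names or other identifiers.

```python
import hashlib
import hmac
import re

# Simple email pattern, sufficient for a sketch. It will not catch every valid address.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pseudonymize(value, secret):
    """Stable token: the same input and key always give the same token."""
    digest = hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "id_" + digest[:12]


def redact_emails(text, secret):
    """Replace every email address in the text with its pseudonym."""
    return EMAIL_RE.sub(lambda m: pseudonymize(m.group(0), secret), text)


# In a real pilot the key would come from a secret store, never from source code.
secret = b"pilot-demo-key"
original = "Customer anna@example.com wrote again, cc: anna@example.com and bob@example.org"
redacted = redact_emails(original, secret)
print(redacted)
```

Because the token is stable, cases from the same customer can still be linked for KPI measurement without the address itself appearing in prompts or logs.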

At EU level, a good starting point for context is the European Commission's AI Act summary page (not legal advice, but rather a source of information).

Pilot risk register template

| Risk | Impact | Probability | Mitigation (in pilot phase) | Owner |
| --- | --- | --- | --- | --- |
| PII leaks in prompts/logs | high | medium | data filtering, pseudonymization, logging rules | DPO/IT |
| LLM hallucination with false information | medium-high | medium | RAG source references, human approval, blocklists | process manager |
| Bad baseline, mismeasured effect | high | medium | measurement plan, recorded definitions, control group | BI/controlling |
| Access blocked (supplier, ERP) | medium | high | export fallback, scope narrowing, priority for accessible resources | IT |
| Lack of business adoption | medium | medium | 1-2 key users, training, short feedback cycle | business lead |
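
To decide which risks to discuss first in the weekly review, the register above can be sorted by a simple impact times probability score. This is a sketch only: the numeric values assigned to "low", "medium", "medium-high", and "high" are assumptions, while the risk entries are taken from the template.

```python
# Assumed numeric mapping for the qualitative levels used in the register
LEVELS = {"low": 1.0, "medium": 2.0, "medium-high": 2.5, "high": 3.0}

# (risk, impact, probability, owner) as in the template above
risk_register = [
    ("PII leaks in prompts/logs", "high", "medium", "DPO/IT"),
    ("LLM hallucination with false information", "medium-high", "medium", "process manager"),
    ("Bad baseline, mismeasured effect", "high", "medium", "BI/controlling"),
    ("Access blocked (supplier, ERP)", "medium", "high", "IT"),
    ("Lack of business adoption", "medium", "medium", "business lead"),
]


def priority(risk):
    """Impact level multiplied by probability level."""
    _, impact, probability, _ = risk
    return LEVELS[impact] * LEVELS[probability]


# sorted() is stable, so risks with equal scores keep their register order
prioritized = sorted(risk_register, key=priority, reverse=True)
for risk in prioritized:
    print(f"{priority(risk):>4.1f}  {risk[0]}  (owner: {risk[3]})")
```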

For secure implementation practices, the DevSecOps approach is a useful reference: DevSecOps in practice: how to build secure CI/CD.

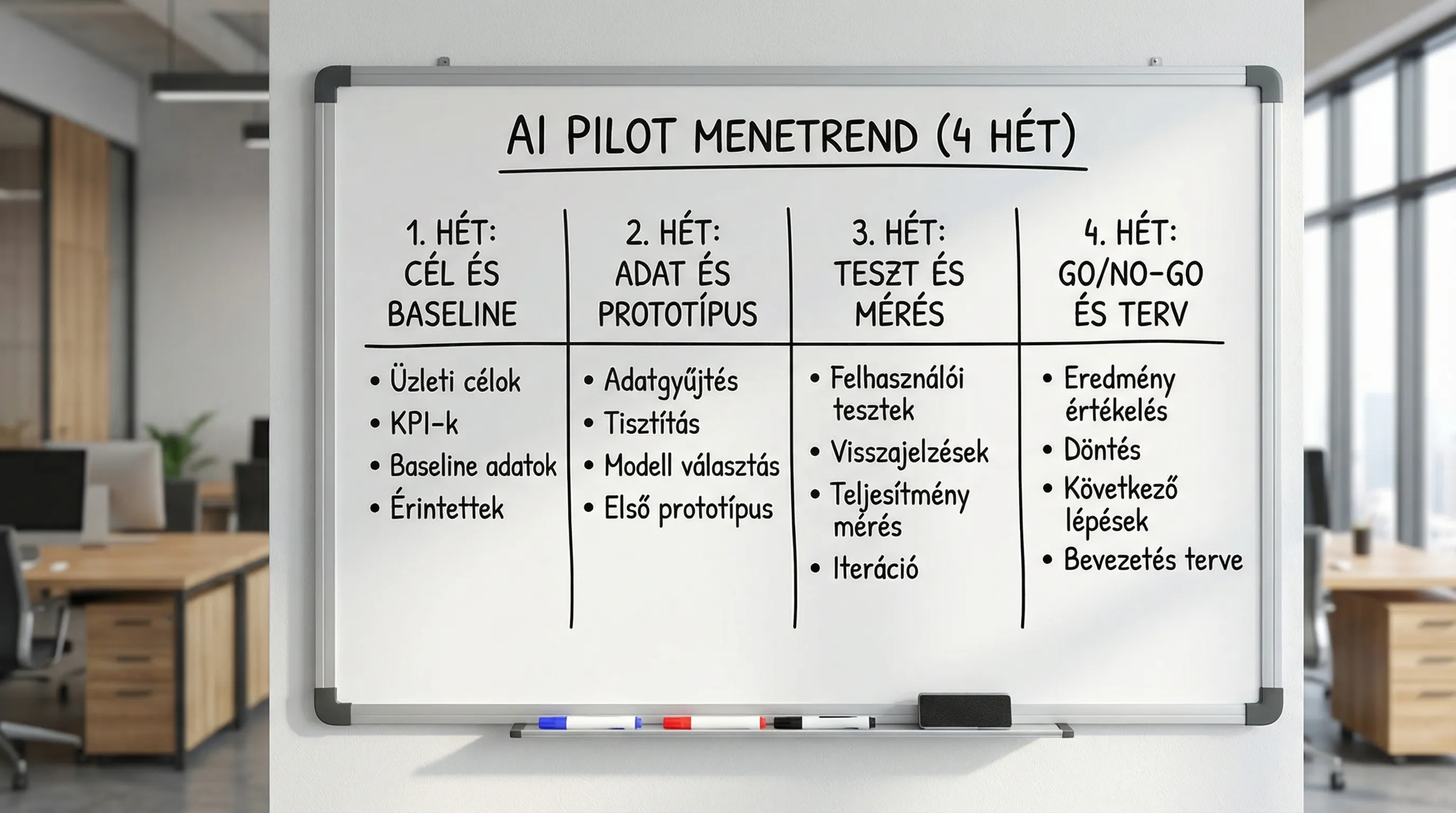

5) 30-day plan: tangible deliverables every week

The following schedule works if decision-makers commit to quick approvals and there is a dedicated business owner.

| Week | Focus | Minimum deliverable | A typical trap |
| --- | --- | --- | --- |
| Week 1 | Use case, scope, baseline | 1-page pilot charter, KPI definitions, list of data sources | too broad a scope, "everything" |
| Week 2 | Data package, prototype | pilot dataset, first model/RAG prototype, access granted | no data owner, prolonged authorization |
| Week 3 | Testing, measurement, fine-tuning | measurement report v1, list of error types, guardrail check | only a "demo" available, no metrics |
| Week 4 | Controlled introduction, decision | pilot results report, risk register update, go/no-go and roadmap | no decision criteria, political debate |

For methodological background on the structure of the "pilot charter" and the formalization of KPIs and risks, see: planning a digitization project: goals, KPIs, risks.

6) Technical decisions that matter in the pilot

A 30-day prototype is not worth building if everything has to be rewritten afterwards. Some technical decisions are both "pilot-compatible" and defensible in the longer term.

LLM: RAG vs. fine-tuning

In most corporate pilots, RAG is faster, cheaper, and more auditable because each answer can be traced back to sources in the corporate knowledge base. Fine-tuning becomes worth considering when:

the language is highly specific (regulations, product catalog, coded abbreviations)

there are many examples of "good answers" with consistent labeling

Integration: minimal is enough, but it must be clear

A common solution in pilot projects is read-only integration (data querying and reporting), with two-way automation coming later. This reduces risk and speeds up go-live.

Operation and monitoring

The pilot should already answer these questions:

where it runs (cloud, on-premises, hybrid)

how it is logged (audit, debugging)

what the shutdown and recovery plan is
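
The logging and shutdown questions above can be prototyped as a thin wrapper around the model call. The following is a minimal sketch, not a production design: `PilotGateway`, `fake_model`, and the audit record fields are hypothetical names. It writes one JSON audit record per case, and routes the case to a human when the kill switch is off or the model call fails.

```python
import json
import time


class PilotGateway:
    """Wraps a model call with an audit log, a kill switch, and a human fallback."""

    def __init__(self, model_fn, audit_log):
        self.model_fn = model_fn    # any callable: str -> str
        self.audit_log = audit_log  # list-like sink, one JSON string per case
        self.enabled = True         # kill switch

    def shutdown(self):
        self.enabled = False

    def recover(self):
        self.enabled = True

    def handle(self, case_id, text):
        record = {"ts": time.time(), "case_id": case_id, "route": "ai", "error": None}
        suggestion = None
        if not self.enabled:
            record["route"] = "human"
        else:
            try:
                suggestion = self.model_fn(text)
            except Exception as exc:  # any failure falls back to the human process
                record["route"] = "human"
                record["error"] = repr(exc)
        self.audit_log.append(json.dumps(record))
        return record["route"], suggestion


def fake_model(text):
    """Stand-in for a real model call. It fails on empty input."""
    if not text.strip():
        raise ValueError("empty input")
    return "suggested category: billing"


audit_log = []
gateway = PilotGateway(fake_model, audit_log)
first = gateway.handle("T-1", "Invoice amount is wrong")
second = gateway.handle("T-2", "   ")
gateway.shutdown()
third = gateway.handle("T-3", "Invoice amount is wrong")
print(first, second, third)
```

The audit record intentionally stores the case ID rather than the input text, which keeps personal data out of the log in this sketch.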

If you plan to use AI as an agent in the long term (multiple steps, use of tools), it is worth preparing for agent-specific risks: AI Agent Creation 2026.

7) What makes the pilot a "success" on day 30?

Closing the pilot is a management decision point. It is successful if the team can clearly answer:

How much impact did we measure compared to the baseline?

How stable and secure is the solution within the pilot scope?

What is needed for scaling (data, integration, change management, costs)?

Go/No-go criteria (in practice)

It is best to record these in advance:

minimum expected KPI improvement

maximum permissible error rate or escalation

acceptable risk level and list of outstanding risks

estimated scaling costs and time (order of magnitude, not "exact to the penny")
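
Criteria recorded in advance can be written down as data, so that the day-30 decision is a comparison rather than a debate. This is a minimal sketch under assumptions: the `GoNoGoCriteria` fields, the thresholds, and the measured values are invented for illustration, and the KPI is assumed to be one where lower is better, such as resolution time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GoNoGoCriteria:
    min_kpi_improvement: float  # e.g. 0.15 means 15 percent better than baseline
    max_error_rate: float
    max_open_high_risks: int


def relative_improvement(baseline, pilot):
    """Improvement for a lower-is-better KPI such as resolution time."""
    return (baseline - pilot) / baseline


def decide(criteria, kpi_improvement, error_rate, open_high_risks):
    """Return the decision and the list of criteria that were not met."""
    failed = []
    if kpi_improvement < criteria.min_kpi_improvement:
        failed.append("kpi_improvement")
    if error_rate > criteria.max_error_rate:
        failed.append("error_rate")
    if open_high_risks > criteria.max_open_high_risks:
        failed.append("open_high_risks")
    return ("go" if not failed else "no-go"), failed


# Hypothetical values: thresholds fixed in week 1, measurements from week 4
criteria = GoNoGoCriteria(min_kpi_improvement=0.15, max_error_rate=0.05, max_open_high_risks=0)
improvement = relative_improvement(baseline=20.0, pilot=16.0)

clean_run = decide(criteria, improvement, error_rate=0.03, open_high_risks=0)
risky_run = decide(criteria, improvement, error_rate=0.08, open_high_risks=1)
print(clean_run, risky_run)
```

Returning the failed criteria alongside the decision gives management the reason for a no-go, which is useful input for a narrower follow-up pilot.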

If the pilot is valuable, the next step is usually a 6–12-week "industrialization" phase (integrations, roles, permissions, monitoring, training, SLAs).

How Syneo can help (quickly, with scope control)

Syneo implements corporate digitalization and AI solutions in projects where measurability (KPIs), data, and risk management are built in from the outset. If you are planning a 30-day AI pilot, the most common entry points for support are:

use case selection and pilot charter compilation

quick review of data access and data quality

developing KPIs, baselines, and measurement plans

controlled pilot implementation and go/no-go decision material

If you want to clarify which use case is "pilot-ready" first, a good starting point might be the broader framework: introducing artificial intelligence: frequently asked questions.